Why multi-step AI workflows need a new language

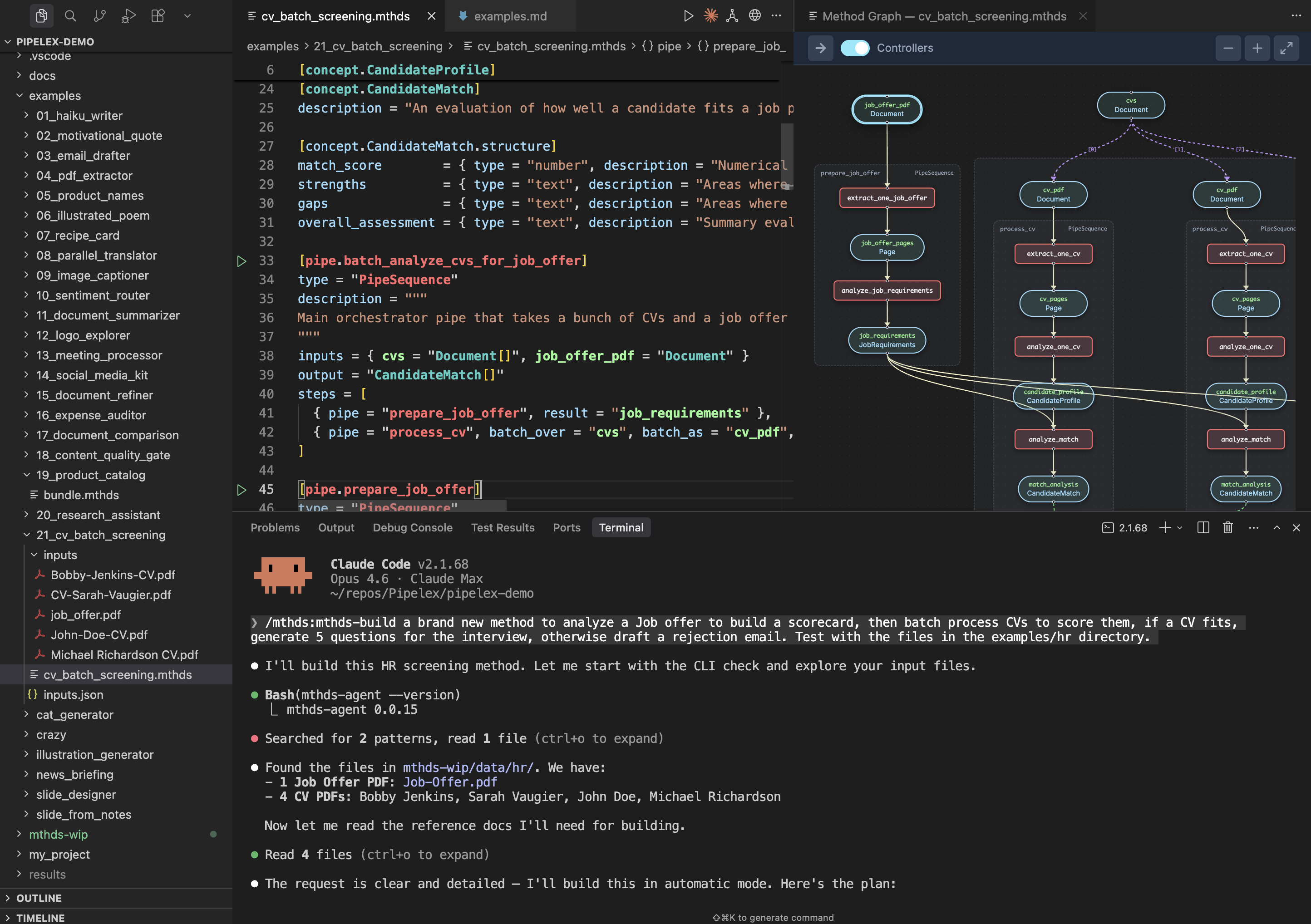

TL;DR: MTHDS (pronounced "methods") is an open, typed DSL for defining multi-step AI workflows. Methods are executable: install one, run it from your terminal, pipe it into another. Think of it as turning LLM workflows into proper UNIX tools. Pipelex is the MIT-licensed runtime. We ship it as a Claude Code plugin so agents can author, validate, and run methods without leaving your terminal.

The problem: AI workflows are trapped

If you build LLM pipelines in production, you've hit this:

- Code-first (Python/TS + LangGraph/Mastra/etc.) → powerful, but the business logic drowns in general-purpose code, so it's hard for non-engineers to review.

- Prompt-first (CLAUDE.md / "do X then Y") → readable, but fragile. No types, no composability, no reproducibility.

Neither gives you something you can run, pipe, or version the way you would a real tool.

The idea: AI methods as executable, composable tools

A method is a typed AI transformation: given inputs of type X, produce output of type Y, through explicit steps (extraction → analysis → routing → generation).

The key insight: methods are executable. A .mthds file isn't just a spec; it's a runnable artifact you can invoke from the CLI, an API, MCP, or the Claude Code plugin.

This is a "devtool for agents":

- methods are the unit of work that agents produce, share, and execute

- as with any devtool, methods come with semantic code coloring, formatting, linting, schema validation, testing...

It's a Claude Code plugin

We ship MTHDS as a Claude Code plugin — the primary way to author and run methods. Inside Claude Code, the agent can:

- Build methods from natural language: "Screen CVs against a job offer, score each candidate"

- Validate them: structural checks, type checking, dry runs with mock inputs

- Run them: real execution with AI inference or dry run

- Fix and iterate on them

- Generate input templates and synthetic test data

# install our plugin into Claude Code:

claude

/plugin marketplace add mthds-ai/mthds-plugins

/plugin install mthds@mthds-plugins

/reload-plugins

If that doesn't work, exit Claude Code and reopen it:

/exit

claude

The workflow: a domain expert describes what they want. The agent produces the typed .mthds file, validates it, runs it, iterates. The method becomes a reusable, versioned tool — not a one-off prompt chain buried in a conversation.

A concrete example

A CV screening method that extracts pages, builds a scorecard, and evaluates each CV:

This is fully executable. It takes real inputs and returns typed output. Validation is structural and conceptual: broken wiring, wrong types, missing inputs, unreferenced variables and logic errors are caught before any model call.
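To make "caught before any model call" concrete, here is a minimal Python sketch of that kind of static wiring check. It is not MTHDS syntax and not the Pipelex validator: the `Step` class, the `validate` function, and the concept names (`Document`, `Pages`, `JobOffer`, `Scorecard`, `Evaluation`) are all hypothetical, chosen to mirror the CV screening example.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One step of a method: named, typed inputs and one named, typed output."""
    name: str
    inputs: dict[str, str]    # variable name -> expected concept
    output: tuple[str, str]   # (variable name, produced concept)


def validate(method_inputs: dict[str, str], steps: list[Step]) -> list[str]:
    """Return wiring errors found without executing any step."""
    errors: list[str] = []
    known = dict(method_inputs)   # variables available so far, with their concepts
    used: set[str] = set()
    for step in steps:
        for var, expected in step.inputs.items():
            if var not in known:
                errors.append(f"{step.name}: missing input '{var}'")
            elif known[var] != expected:
                errors.append(f"{step.name}: '{var}' is {known[var]}, expected {expected}")
            used.add(var)
        out_var, out_type = step.output
        known[out_var] = out_type
    # Anything produced or supplied but never consumed (except the final output) is flagged.
    final_var = steps[-1].output[0] if steps else None
    for var in known:
        if var not in used and var != final_var:
            errors.append(f"unreferenced variable '{var}'")
    return errors


method_inputs = {"cv": "Document", "job_offer": "JobOffer"}
extract = Step("extract_pages", {"cv": "Document"}, ("cv_pages", "Pages"))
scorecard = Step("build_scorecard", {"job_offer": "JobOffer"}, ("scorecard", "Scorecard"))
evaluate = Step("evaluate_cv", {"cv_pages": "Pages", "scorecard": "Scorecard"}, ("evaluation", "Evaluation"))
# Broken variant: the last step is wired to the raw job offer instead of the scorecard.
miswired = Step("evaluate_cv", {"cv_pages": "Pages", "job_offer": "Scorecard"}, ("evaluation", "Evaluation"))

good_errors = validate(method_inputs, [extract, scorecard, evaluate])
broken_errors = validate(method_inputs, [extract, scorecard, miswired])
```

In this sketch the correct wiring yields no errors, while the miswired variant reports both the type mismatch on `job_offer` and the now-unreferenced `scorecard`, all without calling a model.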

Why types matter: conceptual, not just structural

MTHDS types aren't string and int. They're conceptual: NonCompeteClause refines ContractClause refines Text. A pipe expecting ContractClause accepts NonCompeteClause — subtyping carries domain meaning.

Feed a CandidateProfile into a pipe expecting JobDescription? Both are text. The LLM runs fine and gives you confidently wrong output. Conceptual typing catches that before any model call: InputError: pipe 'score_cv' expects JobDescription, got CandidateProfile.

Pipes compose like UNIX tools

Operator pipes do transformations:

- PipeLLM: LLM call with structured output

- PipeExtract: document → pages (OCR)

- plus image-gen, web-search, arbitrary functions

Controller pipes orchestrate:

- PipeSequence: step-by-step

- PipeParallel: concurrent branches

- PipeCondition: routing

- PipeBatch: map over a list

Same artifact, same type system, same validation. No separate orchestration language.
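As a rough mental model, the four controllers behave like ordinary function combinators. The Python sketch below is an analogy under that assumption, not the Pipelex API: `sequence`, `parallel`, `condition`, and `batch` are hypothetical helpers, and the "operators" are trivial stand-ins rather than LLM calls.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Callable

Pipe = Callable[[Any], Any]


def sequence(*pipes: Pipe) -> Pipe:
    """Step-by-step: feed each pipe's output into the next."""
    def run(value: Any) -> Any:
        for pipe in pipes:
            value = pipe(value)
        return value
    return run


def parallel(**branches: Pipe) -> Pipe:
    """Concurrent branches: run every branch on the same input, collect results by name."""
    def run(value: Any) -> dict[str, Any]:
        with ThreadPoolExecutor() as pool:
            futures = {name: pool.submit(pipe, value) for name, pipe in branches.items()}
            return {name: future.result() for name, future in futures.items()}
    return run


def condition(predicate: Callable[[Any], bool], if_true: Pipe, if_false: Pipe) -> Pipe:
    """Routing: pick one of two pipes based on the input."""
    def run(value: Any) -> Any:
        return if_true(value) if predicate(value) else if_false(value)
    return run


def batch(pipe: Pipe) -> Pipe:
    """Map one pipe over a list of items."""
    def run(items: list[Any]) -> list[Any]:
        return [pipe(item) for item in items]
    return run


# Stand-in operators: count words as a "score", then route on the score.
score = lambda cv: len(cv.split())
label = condition(lambda s: s >= 3, lambda s: "shortlist", lambda s: "reject")
screen_all = batch(sequence(score, label))
results = screen_all(["python rust go", "cobol"])
facts = parallel(upper=str.upper, length=len)("abc")
```

Because every combinator returns another pipe, controllers nest freely, which is the property the single-artifact design relies on.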

And because methods have typed inputs and outputs, they compose at the CLI level too — pipe the output of one method into the next, just like grep | sort | uniq.

Packages: version, share, depend

When a method outgrows a single file, it becomes a package:

- METHODS.toml → manifest (identity, exports, dependencies)

- methods.lock → resolved versions + integrity hashes

- .mthds files → the actual methods

Install from the hub, pin versions, resolve dependencies. Standard package management — nothing exotic.
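For readers unfamiliar with lock files, the integrity-hash idea boils down to recording a digest of each resolved artifact and re-checking it at install time. The sketch below assumes SHA-256 and a `sha256:` prefix purely for illustration; the actual hash algorithm and the methods.lock format are not specified here, and `integrity` and `verify` are hypothetical names.

```python
import hashlib


def integrity(content: bytes) -> str:
    """Digest recorded in the lock file when a version is resolved (assumed format)."""
    return "sha256:" + hashlib.sha256(content).hexdigest()


def verify(content: bytes, locked: str) -> bool:
    """At install time, confirm the fetched bytes match what was locked."""
    return integrity(content) == locked


# Placeholder bytes standing in for a fetched .mthds file.
fetched = b"contents of a fetched .mthds file"
locked_hash = integrity(fetched)
unchanged_ok = verify(fetched, locked_hash)
tampered_ok = verify(fetched + b" tampered", locked_hash)
```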

What Pipelex adds as the runtime

- Python package / CLI / API / MCP: 4 ways to execute

- Observability: runs are traced as directed graphs (renderable as Mermaid/ReactFlow). What ran, what produced each intermediate, where branching happened. Compatible with OpenTelemetry, Langfuse, PostHog...

- Tooling: formatting, linting, dry-run validation with mock inputs
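To give a feel for what "renderable as Mermaid" means for the run graphs mentioned above, here is a minimal Python sketch that turns a list of edges into Mermaid flowchart text. The edge list and the `to_mermaid` helper are hypothetical; Pipelex's real trace format is not shown here.

```python
def to_mermaid(edges: list[tuple[str, str]]) -> str:
    """Render (source, target) edges as a top-down Mermaid flowchart."""
    lines = ["flowchart TD"]
    for source, target in edges:
        lines.append(f"    {source} --> {target}")
    return "\n".join(lines)


# Hypothetical trace of the CV screening run: which step fed which.
trace = [
    ("extract_pages", "evaluate_cv"),
    ("build_scorecard", "evaluate_cv"),
]
diagram = to_mermaid(trace)
```

Pasting the resulting text into any Mermaid renderer draws the two upstream steps converging on `evaluate_cv`.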

Pipelex's stance: agents are great at intent and iteration; production needs repeatability and observability. With MTHDS, Pipelex brings deterministic orchestration. You still get LLM creativity, but MTHDS makes AI workflows explicit, validatable, composable, portable, executable, and debuggable.

Open standard, MIT-licensed

We don't want methods trapped in a proprietary GUI or a framework-specific IR.

- MTHDS spec

- MTHDS docs

- MTHDS hub

- Pipelex runtime

- Claude Code plugin

- VS Code / Cursor extension

- Discord

If you're building multi-step AI workflows:

- What breaks most often for you?

- Would executable, typed methods help — or just add ceremony?

- What would you pipe together if methods were real CLI tools?